DevOpsUse

Theoretical Basis

In recent years, software development has been driven mainly by an increased orientation towards customers. This has led to agile development practices and faster deployment of software, especially in innovative Web businesses [1]. It became evident that the developers’ goal of producing software quickly conflicts with the operators’ need to keep it running stably. Traditionally, companies separate the two departments, “development” and “operations”: the former produces code, while the latter manages the often complex IT infrastructure. Scarce communication between these departments and a strict separation of concerns have led to conflicts over unclear accountability for system failures. The methodology called DevOps (a clipped compound of “development” and “operations”) therefore tries to establish a new culture between these departments through tighter integration of software development and deployment within organizations. The term DevOps denotes not only the methodology but also a mindset of working towards the same goal and, last but not least, a collection of software tools that support this culture of collaboration.

Automation is essential for DevOps. It is employed at several layers, from development through delivery to deployment. In development, refactoring means changing code so that its functionality is preserved while its structure, readability, and reusability improve. Today, many Integrated Development Environments (IDEs) automate refactoring operations such as renaming variables or extracting code blocks into separate modules. Code style guidelines agreed across the organization can be checked automatically before code is uploaded from a development workstation to a shared source code repository. Finally, software can be tested automatically for bugs on integration servers and released automatically to app stores for seamless distribution to end-user devices.
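
As a minimal sketch of such an automated style gate, the Python script below checks files against three hypothetical rules: a maximum line length of 100 characters, no trailing whitespace, and no tab characters. The rules, the threshold, and the names `check_style` and `main` are assumptions for illustration, not conventions used in Layers. A Git pre-commit hook could call it with the staged file names (for example, from `git diff --cached --name-only --diff-filter=ACM`); a non-zero exit status from a pre-commit hook makes Git abort the commit.

```python
import sys
from typing import List, Tuple

# Hypothetical organization-wide rule; a real team would agree on its own limits.
MAX_LINE_LENGTH = 100


def check_style(lines: List[str], max_len: int = MAX_LINE_LENGTH) -> List[Tuple[int, str]]:
    """Return (line_number, message) pairs for every rule violation."""
    violations = []
    for number, line in enumerate(lines, start=1):
        text = line.rstrip("\n")
        if len(text) > max_len:
            violations.append((number, f"line longer than {max_len} characters"))
        if text != text.rstrip():
            violations.append((number, "trailing whitespace"))
        if "\t" in text:
            violations.append((number, "tab character"))
    return violations


def main(paths: List[str]) -> int:
    """Check each file and return 1 if any violation was found, else 0."""
    failed = False
    for path in paths:
        with open(path, encoding="utf-8") as handle:
            for number, message in check_style(handle.readlines()):
                print(f"{path}:{number}: {message}")
                failed = True
    return 1 if failed else 0


# Only run as a hook when file paths are given; with no paths there is nothing to check.
if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1:]))
```

Keeping the rule checks in a pure function separates the policy from the Git plumbing, so the same checks could also run on an integration server.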

DevOps reduces the time software needs to reach its end users (i.e., time to market) and enhances communication and collaboration between software developers and IT operations personnel. The methodology leads to a “one team” approach in which programmers, testers, and system administrators are all involved in a rapid, agile software development cycle. In this way, it aims to maximize the predictability, efficiency, security, and maintainability of operational processes [2].

DevOpsUse

While DevOps acknowledges customer demands through faster release cycles based on an agile software development methodology, the term does not cover the continuous involvement of end users in the development process. Therefore, in the second year of the project, we coined the term DevOpsUse, a clipped compound of “developers”, “operators”, and “(end) users”.

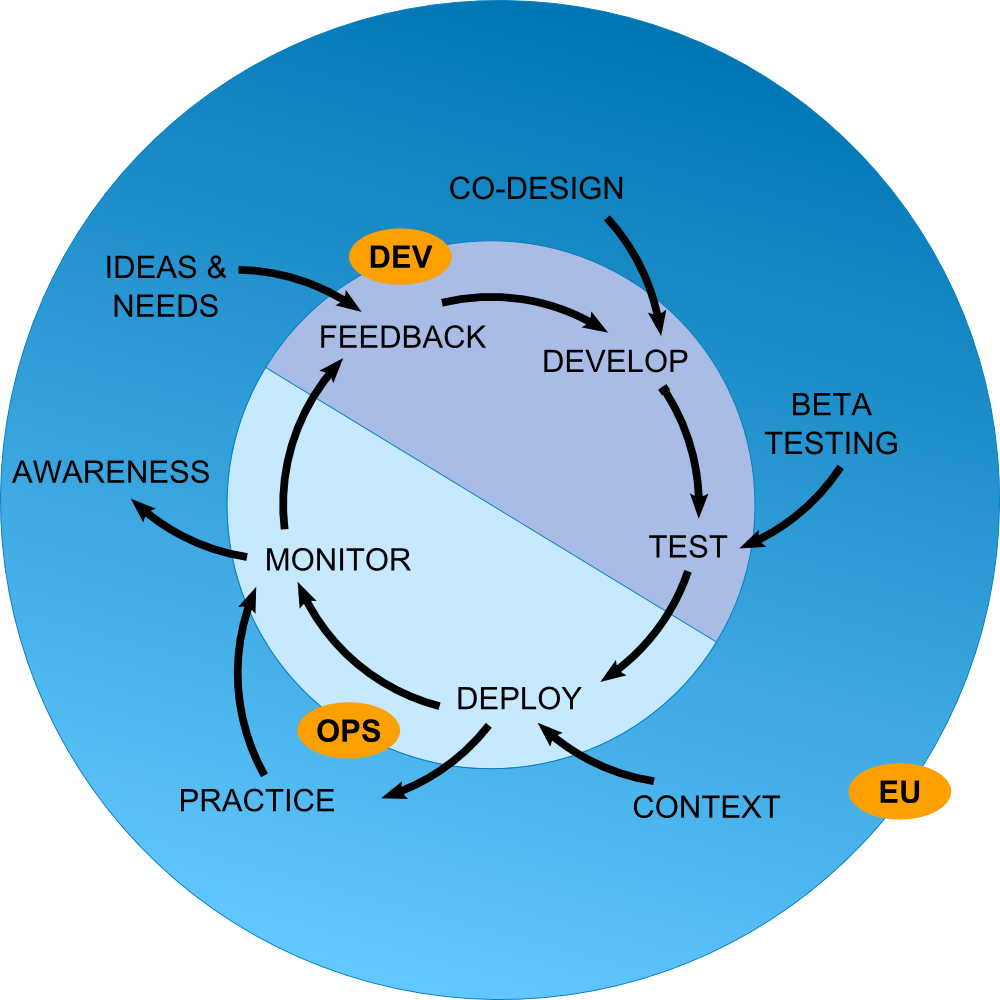

Figure 1 - DevOpsUse Life Cycle

The DevOpsUse life cycle model shown in Figure 1 is based on a common DevOps life cycle model, represented by the inner circle (http://newrelic.com/devops/lifecycle). However, the existing model does not include any end-user participation. Following our argument for the importance of end users in Open Source Software Development (OSSD), we added the outer circle to illustrate at which steps of the standard DevOps life cycle end users have significant impact. In the early phases of OSSD in particular, end users are valuable sources of needs and ideas.

During development, end users participate in co-designing and in alpha and beta testing the Open Source Software (OSS) before an official release. Deployment is partly guided by end-user requirements regarding their contexts of use. In particular, end users help decide on which premises an OSS is deployed (e.g., public cloud data centers, organizational cloud installations, private cloudlets, or hybrid forms; for an overview, cf. [3]).

Once the OSS is deployed, end users carry out their practice using it. This produces additional usage data, which is captured by monitoring and made available to the whole community to create awareness and prompt further ideas and needs. Aware of the current status, end users provide valuable feedback in addition to the information gathered by monitoring. This feedback should be both retrospective (e.g., completing surveys on OSS quality and impact) and prospective (e.g., sharing new needs and ideas as part of Social Requirements Engineering (SRE) [4]).

DevOpsUse in Learning Layers

In the following, we present examples of DevOpsUse in action, including two tools developed within the scope of the project.

Requirements Analysis

Software architectures are built on functional and non-functional requirements. In Layers, user requirements were elicited as a first step at the start of the project, through various activities involving end-user partners in the two Layers application clusters, healthcare and construction. Representatives of these clusters worked together in design teams, which were established at the first design conference in Helsinki in 2013. The design teams created and worked on use cases, storyboards, and basic interactions, using wireframes and different prototypes.

Requirements Bazaar

The Requirements Bazaar is a browser-based social software platform for Social Requirements Engineering that addresses the challenge of establishing a feedback cycle between users and developers in the manner of a social network. A public installation is available at https://requirements-bazaar.org. Stakeholders from diverse Communities of Practice (CoPs) are brought together with service providers in an open and traceable process of collaborative requirements elicitation, negotiation, prioritization, and realization. Lively communication among all stakeholders of an open source project is essential in this regard [5][6]. The Bazaar aims to support all stakeholders in reaching their particular goals on a common basis: CoPs in expressing their specific needs and negotiating realizations in an intuitive, community-aware manner, and service providers in prioritizing the realization of requirements for maximum impact.

In the requirements engineering step, the Requirements Bazaar was used for a collective voting process that produced a ranking of the elicited functional requirements as input for quality function deployment (see next section). Partners were asked to express their opinion by voting on the requirements they considered most important. A vote was cast by “liking” a particular requirement; the options available for each requirement were “like”, no action, and “dislike”. Through this collective process, all existing requirements were rated, which enabled their prioritization. The ranking was constructed by sorting the list of requirements by the scores obtained in the vote. The most popular requirement (Activity Tracker) received 16 positive votes in total, while the last requirement in the ranking received only two.
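
The ranking step can be sketched as follows. The source does not specify how the Requirements Bazaar computes scores, so this sketch assumes that each “like” counts +1, each “dislike” counts -1, “no action” casts no vote, and a voter's latest vote on a requirement replaces any earlier one. The function name `rank_requirements`, its `(voter, requirement, vote)` input format, and the alphabetical tie-breaking are likewise illustrative assumptions.

```python
from typing import Dict, Iterable, List, Tuple

# Assumed scoring: "like" counts +1, "dislike" -1; "no action" casts no vote at all.
VOTE_VALUES = {"like": 1, "dislike": -1}


def rank_requirements(
    requirements: Iterable[str],
    votes: Iterable[Tuple[str, str, str]],
) -> List[Tuple[str, int]]:
    """Rank requirements by net score, given (voter, requirement, vote) triples."""
    # Every requirement gets a score, even if nobody voted on it.
    scores: Dict[str, int] = {name: 0 for name in requirements}
    # Keep only each voter's latest vote per requirement.
    latest: Dict[Tuple[str, str], str] = {}
    for voter, requirement, vote in votes:
        if vote not in VOTE_VALUES:
            raise ValueError(f"unknown vote: {vote!r}")
        if requirement not in scores:
            raise KeyError(f"unknown requirement: {requirement!r}")
        latest[(voter, requirement)] = vote
    for (_, requirement), vote in latest.items():
        scores[requirement] += VOTE_VALUES[vote]
    # Sort by descending score; ties are broken alphabetically.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))
```

Ranking by positive votes alone, as in the counts reported above, would only require mapping “dislike” to 0 in `VOTE_VALUES`.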

House of Quality

The Layers architecture was designed so that components could be integrated into the overall framework continuously. This requires deliberate architectural decisions informed by the actual needs of end users, both functional and non-functional. We therefore chose to adopt a well-established methodology that allowed us to map technical features offered by new and existing components to end-user requirements in the context of use. The general methodology we chose is called Quality Function Deployment (QFD) [7], and the particular instrument we adopted to map features to requirements is called the House of Quality (HoQ). In 2013, we developed a Web-based collaborative prototype for building HoQs and made it publicly available on the Chrome Web Store. It was used in the early phase of Layers to prioritize the functional and non-functional requirements of the applications envisioned by the design teams.
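
The core House of Quality calculation can be illustrated with a short sketch: the absolute importance of each technical feature is the sum, over all end-user requirements, of the requirement's weight multiplied by the strength of its relationship to that feature, here using the conventional QFD scale of 9/3/1 for strong/medium/weak relationships. The requirement weights could, for instance, be derived from the Requirements Bazaar ranking. The function names, the scale, and the example data in the usage note are assumptions; this is not a description of the Layers HoQ prototype.

```python
from typing import Dict, List

# Conventional QFD relationship scale (assumed); absent entries mean "no relationship".
STRENGTH = {"strong": 9, "medium": 3, "weak": 1}


def technical_importance(
    requirement_weights: Dict[str, float],
    relationships: Dict[str, Dict[str, str]],
    features: List[str],
) -> Dict[str, float]:
    """Absolute importance of each technical feature in a House of Quality.

    relationships[requirement][feature] names the strength of the link;
    a feature missing from `features` raises KeyError.
    """
    importance = {feature: 0.0 for feature in features}
    for requirement, weight in requirement_weights.items():
        for feature, strength in relationships.get(requirement, {}).items():
            importance[feature] += weight * STRENGTH[strength]
    return importance


def relative_importance(importance: Dict[str, float]) -> Dict[str, float]:
    """Normalize absolute importances to percentages that sum to 100."""
    total = sum(importance.values())
    return {f: (100.0 * v / total if total else 0.0) for f, v in importance.items()}
```

For example, with hypothetical weights `{"Track activities": 5, "Work offline": 3}`, a strong link from “Track activities” to a “Sensor API” feature yields 5 × 9 = 45 for that feature; features with the highest relative importance are natural candidates for early integration.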

Examples of Application in Learning Layers

Besides the tools introduced in the previous sections, the most notable application of DevOpsUse practices was the establishment of the Layers Developer Task Force (LDTF), a community of developers across partners, including professional developers and researchers doing development. This body met biweekly to discuss ongoing development-related topics. Over the course of the project, LDTF representatives were also invited to co-design sessions to advise design teams on the feasibility of design ideas. Through these activities, LDTF members completed the DevOpsUse life cycle towards end users by synchronizing and discussing issues with co-design teams, exchanging documentation and APIs, and supporting the initial compatibility and integration of different prototypes.

Glossary

- API: Application Programming Interface

- CoP: Community of Practice

- DevOps: Development and Operations

- HoQ: House of Quality

- IDE: Integrated Development Environment

- LDTF: Layers Developer Task Force

- OSS: Open Source Software

- OSSD: Open Source Software Development

- QFD: Quality Function Deployment

- SRE: Social Requirements Engineering

Contributing Authors

References

- [1] J. Humble and D. Farley, Continuous Delivery: Reliable Software Releases through Build, Test, and Deployment Automation, 2nd printing. Upper Saddle River, NJ, USA: Addison-Wesley, 2011.

- [2] M. Hüttermann, DevOps for Developers (The Expert’s Voice in Web Development). Berkeley, CA, USA: Apress, 2012. DOI: 10.1007/978-1-4302-4570-4

- [3] P. Mell and T. Grance, “The NIST Definition of Cloud Computing,” NIST Special Publication 800-145, 2011.

- [4] E. L.-C. Law, A. Chatterjee, D. Renzel, and R. Klamma, “The Social Requirements Engineering (SRE) Approach to Developing a Large-Scale Personal Learning Environment Infrastructure,” in 21st Century Learning for 21st Century Skills, vol. 7563, A. Ravenscroft, S. Lindstaedt, C. D. Kloos, and D. Hernández-Leo, Eds. Berlin/Heidelberg, Germany: Springer, 2012, pp. 194–207. DOI: 10.1007/978-3-642-33263-0_16

- [5] A. Hannemann, R. Klamma, and M. Jarke, “Soziale Interaktion in Open-Source-Communitys,” HMD Praxis der Wirtschaftsinformatik, vol. 49, no. 1, pp. 26–37, 2012. DOI: 10.1007/BF03340660

- [6] A. Hannemann and R. Klamma, “Community Dynamics in Open Source Software Projects: Aging and Social Reshaping,” in Open Source Software: Quality Verification, vol. 404, E. Petrinja, G. Succi, N. El Ioini, and A. Sillitti, Eds. Berlin/Heidelberg, Germany: Springer, 2013, pp. 80–96. DOI: 10.1007/978-3-642-38928-3_6

- [7] Webducate.net, “Quality Function Deployment.” [Online]. Available at: http://www.webducate.net/qfd/qfd.html